In this article, we will implement an example of an O(log N) algorithm and explore what O(log N) time complexity means. We will also discuss the advantages and disadvantages of logarithmic algorithms and explain why they are an important tool in algorithm design. By the end of this article, you will have a better understanding of O(log N) algorithms. O(N) and O(N²) algorithms are covered in a separate article.

Common Algorithm Time Complexities

When dealing with algorithms, it is important to consider their time complexity. The time complexity of an algorithm refers to how its performance scales with the size of the input. Let’s see the most common algorithm time complexities in order from the fastest to the slowest:

- O(1) – constant time complexity. The algorithm takes the same amount of time to run, regardless of the size of the input.

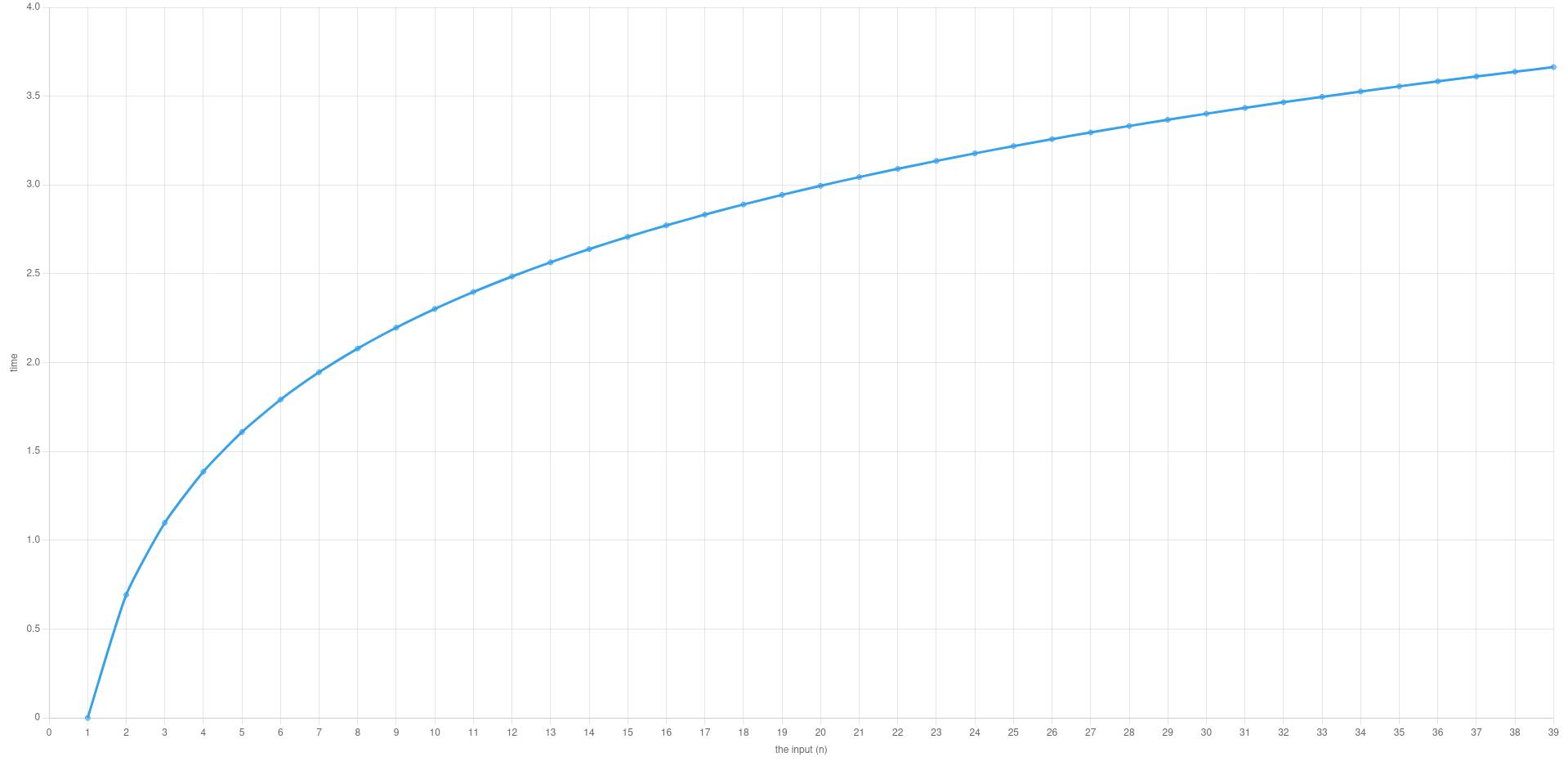

- O(log N) – logarithmic time complexity. The time required to run the algorithm increases logarithmically with the size of the input.

- O(N) – linear time complexity. The time required to run the algorithm increases linearly with the size of the input.

- O(N log N) – linearithmic time complexity. The time required to run the algorithm grows in proportion to the size of the input multiplied by the logarithm of the input size.

- O(N^2) – quadratic time complexity. The time required to run the algorithm increases quadratically with the size of the input.

- O(N^3) – cubic time complexity. The time required to run the algorithm increases cubically with the size of the input.

- O(2^N) – exponential time complexity. The time required to run the algorithm increases exponentially with the size of the input.

- O(N!) – factorial time complexity. The time required to run the algorithm increases factorially with the size of the input.

The actual running time of an algorithm can vary depending on the specific hardware and software used. Therefore, time complexity should be used as a theoretical measure of performance, rather than an absolute measure.
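To make the gap between these classes concrete, here is a minimal sketch that prints approximate step counts for a few input sizes. The class and method names (`GrowthDemo`, `Steps`) are illustrative only, and the sketch assumes .NET Core 3.0 or later, where `Math.Log2` is available.

```csharp
using System;

public static class GrowthDemo
{
    // Approximate step counts for several complexity classes at input size n.
    // These are idealized counts, not measured running times.
    public static (double LogN, double N, double NLogN, double NSquared) Steps(long n)
    {
        double log = Math.Log2(n);
        return (log, n, n * log, (double)n * n);
    }

    public static void Main()
    {
        foreach (long n in new long[] { 16, 1_024, 1_048_576 })
        {
            var s = Steps(n);
            Console.WriteLine(
                $"N = {n}: log N = {s.LogN}, N log N = {s.NLogN}, N^2 = {s.NSquared}");
        }
    }
}
```

Going from 1,024 to 1,048,576 items multiplies the linear count by 1,024, while the logarithmic count only doubles.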

As you can see, algorithms with a time complexity of O(log n) are among the fastest in the list; only constant-time algorithms scale better. To measure your application’s real-world performance, you should also consider load testing it.

What is O(log N) Time Complexity?

O(log n) time complexity means that the running time of an algorithm grows in proportion to the logarithm of the input size. In practice, the time required to solve the problem increases very slowly as the input grows: doubling the input size adds only a constant amount of extra work.

The base of the logarithm is not important for big O notation, so O(log n) represents any logarithmic base, such as base 2 or base 10. An algorithm with O(log n) time complexity is generally considered efficient and fast, especially when compared to algorithms with higher time complexities like O(N) or O(N^2).
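The reason the base does not matter follows from the change-of-base identity, written out here as a short worked equation. Logarithms in different bases differ only by a constant factor, and big O notation ignores constant factors.

```latex
\log_a n = \frac{\log_b n}{\log_b a}
\qquad\Longrightarrow\qquad
\log_2 n = \frac{\log_{10} n}{\log_{10} 2} \approx 3.32 \, \log_{10} n
```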

The most common example of an algorithm with O(log n) time complexity is the binary search algorithm, which finds a specific value in a sorted list of n items by halving the search range at each iteration. Not every divide-and-conquer algorithm is logarithmic, however: QuickSort (on average) and MergeSort run in O(n log n) time, because they still have to process every element at each of their roughly log n levels of recursion.
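The halving idea behind binary search can be sketched as follows. This is a minimal illustration, not a replacement for the built-in `Array.BinarySearch`; the names `BinarySearchDemo` and `BinarySearch` are chosen for this example, and the input array is assumed to be sorted in ascending order.

```csharp
using System;

public static class BinarySearchDemo
{
    // Returns the index of target in an ascending sorted array, or -1 if absent.
    public static int BinarySearch(int[] sorted, int target)
    {
        int low = 0;
        int high = sorted.Length - 1;

        while (low <= high)
        {
            // Written this way to avoid integer overflow in (low + high) / 2
            int mid = low + (high - low) / 2;

            if (sorted[mid] == target)
            {
                return mid;
            }

            if (sorted[mid] < target)
            {
                low = mid + 1;   // discard the lower half
            }
            else
            {
                high = mid - 1;  // discard the upper half
            }
        }

        return -1;
    }

    public static void Main()
    {
        int[] data = { 2, 5, 8, 12, 16, 23, 38, 56, 72, 91 };
        Console.WriteLine(BinarySearch(data, 23)); // prints 5
        Console.WriteLine(BinarySearch(data, 7));  // prints -1
    }
}
```

Each pass through the loop discards half of the remaining range, so an array of n items needs at most about log2(n) + 1 comparisons of the middle element.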

O(log n) time complexity is considered a desirable time complexity in many cases because it allows us to solve problems efficiently even when the input size is very large. In this article, we will discuss an example of an algorithm with this time complexity: finding the greatest common divisor (GCD) of two positive integers.

O(log N) Example: (GCD) Euclidean Algorithm

The greatest common divisor (GCD) of two positive integers a and b is the largest positive integer that divides both a and b without leaving a remainder. There are many methods to find the GCD of two integers, but one of the most efficient ones is the Euclidean algorithm. The Euclidean algorithm is a simple algorithm, which can be written either recursively or as a loop, that relies on the fact that the GCD of two numbers is the same as the GCD of the smaller number and the remainder of the larger number divided by the smaller number. In other words, GCD(a, b) = GCD(b, a mod b).

Steps

Let’s take a closer look at the steps of the Euclidean algorithm:

1. Ensure that a is greater than b. If b is greater than a, swap the values of a and b.

2. Compute the remainder r of a divided by b.

3. If r is equal to 0, then the GCD of a and b is b. Otherwise, set a to b and b to r, and go back to step 2.

The Euclidean algorithm has a time complexity of O(log N), where N is the larger of the two input integers. This is because the remainder of a divided by b is always less than half of a (for a ≥ b), so after every two iterations the larger value has been reduced by a factor of at least 2. The number of iterations required is therefore proportional to log N.
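To see the logarithmic growth in practice, a small sketch can count how many loop iterations the algorithm performs. The names `GcdStepsDemo` and `CountSteps` are made up for this illustration, and the inputs are assumed to be positive. Consecutive Fibonacci numbers are used because they are known to be the slowest inputs of their size for the Euclidean algorithm.

```csharp
using System;

public static class GcdStepsDemo
{
    // Counts the loop iterations of the Euclidean algorithm for positive a and b.
    public static int CountSteps(long a, long b)
    {
        int steps = 0;

        while (b > 0)
        {
            long r = a % b;
            a = b;
            b = r;
            steps++;
        }

        return steps;
    }

    public static void Main()
    {
        // 89 and 55 are consecutive Fibonacci numbers (a slow case for their size)
        Console.WriteLine(CountSteps(89, 55));             // prints 9
        // Much larger inputs can still finish in very few iterations
        Console.WriteLine(CountSteps(1_000_000, 999_999)); // prints 2
    }
}
```

Even in the slow Fibonacci case, the iteration count grows with the number of digits of the inputs rather than with their magnitude.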

C# Implementation

Here’s an example implementation of the Euclidean algorithm in C#:

    static int GCD(int a, int b)
    {
        // Ensure that a is greater than b
        if (b > a)
        {
            int temp = a;
            a = b;
            b = temp;
        }

        // Compute GCD using the Euclidean algorithm
        while (b > 0)
        {
            int r = a % b;
            a = b;
            b = r;
        }

        return a;
    }

To use this function, you can call it with two integers as arguments, like this:

    int gcd = GCD(24, 36);
    Console.WriteLine("The GCD of 24 and 36 is: " + gcd);

And the output is:

    The GCD of 24 and 36 is: 12

In this example, the function takes two integers as input and then uses the Euclidean algorithm to compute the GCD of these two integers. The function returns the GCD as an integer.
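The same rule, GCD(a, b) = GCD(b, a mod b), can also be written recursively. The sketch below is one possible variant, assuming non-negative inputs; `RecursiveGcdDemo` and `GcdRecursive` are illustrative names. It cross-checks the result against `BigInteger.GreatestCommonDivisor` from `System.Numerics`, which is part of .NET.

```csharp
using System;
using System.Numerics;

public static class RecursiveGcdDemo
{
    // Recursive form: gcd(a, 0) = a, otherwise gcd(a, b) = gcd(b, a % b).
    // Assumes a and b are non-negative.
    public static int GcdRecursive(int a, int b)
    {
        return b == 0 ? a : GcdRecursive(b, a % b);
    }

    public static void Main()
    {
        Console.WriteLine(GcdRecursive(24, 36)); // prints 12

        // Cross-check with the built-in BigInteger implementation
        Console.WriteLine(BigInteger.GreatestCommonDivisor(24, 36)); // prints 12
    }
}
```

No explicit swap is needed here: when a is smaller than b, the first call computes a % b = a, which exchanges the two values on the next call.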

Other Algorithms With the O(log N) Time Complexity

Let’s see some examples of algorithms with a time complexity of O(log N).

- Binary search: This is a search algorithm that works by repeatedly dividing a sorted array in half until the target element is found, or until it is clear that the target element is not in the array. The time complexity of binary search is O(log N).

- Exponentiation by squaring: Given a base and an exponent, the algorithm computes the power by repeatedly squaring the base and halving the exponent until the exponent is zero. The time complexity of this algorithm is also O(log N), where N is the exponent.

- Balanced binary search tree operations: Insertion, deletion, and search operations in balanced binary search trees, such as AVL trees and Red-Black trees, all have a time complexity of O(log N).

- Finding the greatest common divisor (GCD): Given two positive integers a and b, the algorithm computes the GCD by repeatedly taking the remainder of the larger number divided by the smaller number, until the smaller number is zero. The time complexity of this algorithm is also O(log N).

- Inserting into or removing from a binary heap: Heaps are data structures that maintain the property that the root node has either the minimum or maximum value in the tree. Reading the minimum or maximum element at the root takes only O(1) time, but inserting an element or removing the root requires restoring the heap property along one path of the tree, which takes O(log N) time.
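As an illustration of the exponentiation item in the list above, here is a minimal sketch of computing a power by repeated squaring. The names `FastPowerDemo` and `Power` are illustrative, the exponent is assumed to be non-negative, and the sketch does not guard against overflow of `long`.

```csharp
using System;

public static class FastPowerDemo
{
    // Computes baseValue^exponent in O(log exponent) multiplications.
    // Note: results that exceed long.MaxValue overflow silently in this sketch.
    public static long Power(long baseValue, int exponent)
    {
        if (exponent < 0)
        {
            throw new ArgumentOutOfRangeException(nameof(exponent));
        }

        long result = 1;

        while (exponent > 0)
        {
            // If the lowest bit of the exponent is set, fold the current base in
            if ((exponent & 1) == 1)
            {
                result *= baseValue;
            }

            baseValue *= baseValue; // square the base
            exponent >>= 1;         // halve the exponent
        }

        return result;
    }

    public static void Main()
    {
        Console.WriteLine(Power(2, 10)); // prints 1024
        Console.WriteLine(Power(3, 13)); // prints 1594323
    }
}
```

Because the exponent is halved on every pass, an exponent of 13 needs only four passes through the loop instead of thirteen multiplications.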

Advantages

- Efficient: Algorithms with O(log N) time complexity are generally very efficient and can solve problems quickly, even as the input size grows larger.

- Scalable: Logarithmic algorithms are highly scalable, meaning that they can handle large input sizes with ease.

Disadvantages

- Limited use cases: Not all problems can be solved using logarithmic algorithms. In some cases, algorithms with higher time complexities like O(N) or O(N^2) may be necessary.

- Limited flexibility: Logarithmic algorithms often require the input to be sorted or meet other specific requirements, which can limit their flexibility in certain situations.

- High implementation complexity: Some logarithmic algorithms can be difficult to implement, requiring a deep understanding of mathematical concepts like logarithms and recursive functions.

You may also want to learn more about stacks, queues, and binary trees.

Conclusion

Finding the greatest common divisor of two positive integers is a common problem in mathematics and computer science. The Euclidean algorithm is an efficient algorithm that can be used to find the GCD of two integers, with a time complexity of O(log N). By understanding the time complexity of an algorithm and choosing the right one for a specific problem, we can improve the efficiency and performance of our programs.